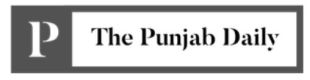

Software in the Making: Control Your Smartphone with Your Eyes

Tech lovers, it's time to twiddle your thumbs again. Just move your eyes as you stare at your smartphone and watch it respond. Researchers are already developing software that will let you control your smartphone with your eye movements.

An international team of researchers, which includes a graduate student of Indian origin, is racking its "technology" brains once again. Get ready to open apps, play games and do much more simply by blinking or moving your eyes: the researchers are developing software that responds to eye movements, and they confirm that the smartphone will react to the movement of the user's eyes.

The research team working on this software comes from the Massachusetts Institute of Technology (MIT), the University of Georgia and Germany's Max Planck Institute for Informatics. MIT Technology Review reported that the "team has so far been able to train software to identify where a person is looking with an accuracy of about a centimeter on a mobile phone and 1.7 centimeters on a tablet." Aditya Khosla of MIT, a co-author of the study, says that more data will improve the system's accuracy.

Eager to see how people look at their smartphones outside the lab, the team developed an app called GazeCapture, which gathers data on the way people gaze at their phones in different environments. The app uses the phone's front camera to record the user's gaze as they look at "pulsating dots on the screen of a Smartphone". According to reports, "To make sure they were paying attention, they were then shown a dot with an 'L' or 'R' inside it, and they had to tap the left or right side of the screen in response."
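
The reports do not say how GazeCapture scores these taps. As a rough illustration only, the attention check could be sketched in Python as below. The names `AttentionTrial`, `passed_attention_check` and `attention_rate`, and the rule that the screen is split at its horizontal midpoint, are hypothetical assumptions, not details of the researchers' app.

```python
from dataclasses import dataclass


@dataclass
class AttentionTrial:
    """One hypothetical attention-check trial (not GazeCapture's real data format)."""
    letter: str          # "L" or "R", the letter shown inside the dot
    tap_x: float         # horizontal position of the user's tap, in pixels
    screen_width: float  # width of the screen, in pixels


def passed_attention_check(trial: AttentionTrial) -> bool:
    """Return True if the tap landed on the side named by the letter.

    Assumption: the screen is split at its horizontal midpoint; a tap exactly
    on the midpoint counts as the right side.
    """
    if trial.letter not in ("L", "R"):
        raise ValueError("letter must be 'L' or 'R'")
    tapped_left = trial.tap_x < trial.screen_width / 2
    return tapped_left == (trial.letter == "L")


def attention_rate(trials: list[AttentionTrial]) -> float:
    """Fraction of trials passed; 0.0 for an empty list."""
    if not trials:
        return 0.0
    return sum(passed_attention_check(t) for t in trials) / len(trials)
```

In a setup like this, gaze samples recorded during failed trials could be set aside before they are used for training. That is one plausible design choice, not something the study is reported to do.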

The data gathered through GazeCapture was used to train software called iTracker, which also runs on an iPhone. The handset's camera captures the user's face; iTracker notes the position and direction of the user's head and then examines the eyes to determine where on the screen the user is looking.

According to Khosla, nearly 1,500 people have already used the GazeCapture app. The researchers aim to get 10,000 people to stare at their screens so that iTracker's error can be reduced to "half a centimeter". The results of the study were presented at the IEEE Conference on Computer Vision and Pattern Recognition in Seattle, Washington. Khosla says that in the future the software could also be used to help diagnose conditions such as "concussions and schizophrenia".